At AirGradient, we have a Palas Fidas 200S, a regulatory-grade, EU-approved reference instrument that we use for internal evaluation and calibration work. Having this capability in-house also gives us an opportunity to go beyond our own development needs and examine how other widely used outdoor monitors perform.

Naturally, this made us curious, so we brought some third-party monitors into our existing test setup and evaluated them alongside the reference instrument under the same conditions we use to test our own monitors. The objective was not to promote our own monitors, but to get a clearer, more transparent look at how other popular outdoor air quality monitors on the market perform.

For this reason, we chose not to include our own AirGradient monitors in this analysis. Because they are calibrated against this exact reference instrument, the test would amount to ideal conditions for them, and including them would not make for a fair or independent comparison. We also know very little about how the other devices are calibrated.

So, after a few weeks of testing, I am excited to present the results for two outdoor monitors that are frequently compared with the AirGradient Open Air: the PurpleAir Zen and the IQAir AirVisual Outdoor. The following sections outline how each device performed when evaluated against a regulatory-grade reference instrument, both indoors and outdoors.

Indoor Comparison

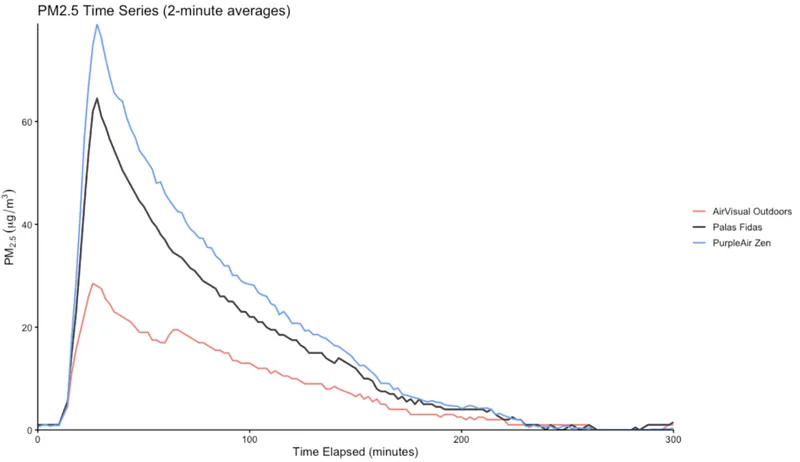

For the first test, we placed both the PurpleAir Zen and the AirVisual Outdoor in our test chamber for several days and put them through our standard testing procedure multiple times. The test is conducted in a controlled environment, where we introduce incense smoke to increase the particle concentration until it reaches a peak at 60 µg/m³. From there, we allow the concentration to decrease naturally as particles settle. Each test cycle takes approximately three hours.

We repeated this test cycle five times, with all five runs showing the same overall behaviour from both devices. While the dataset is still relatively small, the consistency across cycles gives confidence that the observed trends are reproducible rather than incidental.

A couple of notes before looking at the results: both the PurpleAir Zen and the AirVisual Outdoor use dual PM sensor configurations. The values shown here are the averages of the two sensors in each device. The graphs display 2-minute averages from each monitor, as we were unable to export per-minute data from the PurpleAir device. No corrections have been applied to the data.
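For readers who want to prepare their own data the same way, here is a minimal sketch of the two steps described above: averaging a monitor's two PM channels and then aggregating into fixed 2-minute windows. The function names and the sample readings are hypothetical and purely illustrative; this is not the exact processing pipeline used for the charts.

```python
from collections import defaultdict
from statistics import mean


def dual_sensor_average(channel_a, channel_b):
    """Average the two PM channels of one monitor, sample by sample."""
    return [(a + b) / 2 for a, b in zip(channel_a, channel_b)]


def window_average(samples, window_s=120):
    """Average (timestamp_seconds, value) samples into fixed windows.

    Returns a sorted list of (window_start_seconds, mean_value).
    """
    buckets = defaultdict(list)
    for t, value in samples:
        # Align each sample to the start of its window.
        buckets[t - t % window_s].append(value)
    return [(start, mean(vals)) for start, vals in sorted(buckets.items())]


# Made-up readings in µg/m³, one sample per minute (illustration only).
timestamps = [0, 60, 120, 180]
channel_a = [10.0, 12.0, 20.0, 22.0]
channel_b = [12.0, 14.0, 22.0, 24.0]

combined = dual_sensor_average(channel_a, channel_b)
two_minute = window_average(list(zip(timestamps, combined)))
print(two_minute)  # [(0, 12.0), (120, 22.0)]
```

Passing `window_s=600` to the same helper would give 10-minute averages instead.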

In the first test, the PurpleAir Zen tracked the concentration recorded by the Palas quite closely, particularly at lower concentrations. At higher concentrations, however, it began to overreport relative to the reference. Since PurpleAir devices use Plantower PM sensors, this behaviour at elevated concentrations is well-documented and not unexpected.

By contrast, the AirVisual Outdoor underreported PM₂.₅ across nearly the entire test cycle. This result is also unsurprising given the characteristics of the test aerosol. The Palas has a lower effective particle size detection limit than the AirVisual Outdoor, and incense smoke is dominated by very fine particles. As a result, a portion of the mass detected by the Palas is effectively invisible to the two low-cost monitors.
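One way to put numbers on this kind of concentration-dependent over- or underreporting is to compute the mean difference between a monitor and the reference within bins of reference concentration. The sketch below is only an illustration: the helper name, the bin edges, and the readings are all made up, and it is not the analysis behind the charts in this article.

```python
def mean_bias_by_bin(reference, monitor, edges):
    """Mean (monitor - reference) for reference values in each [lo, hi) bin."""
    result = {}
    for lo, hi in zip(edges, edges[1:]):
        diffs = [m - r for r, m in zip(reference, monitor) if lo <= r < hi]
        if diffs:  # skip bins that contain no samples
            result[(lo, hi)] = sum(diffs) / len(diffs)
    return result


# Made-up readings in µg/m³ (illustration only, not measured data).
reference = [5.0, 10.0, 30.0, 50.0]
monitor = [5.0, 11.0, 36.0, 60.0]

bias = mean_bias_by_bin(reference, monitor, edges=[0, 20, 40, 60])
for (lo, hi), value in bias.items():
    print(f"{lo}-{hi} µg/m³: mean bias {value:+.1f} µg/m³")
```

A positive bias that grows with concentration corresponds to overreporting at higher levels, while a consistently negative bias corresponds to underreporting across the cycle.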

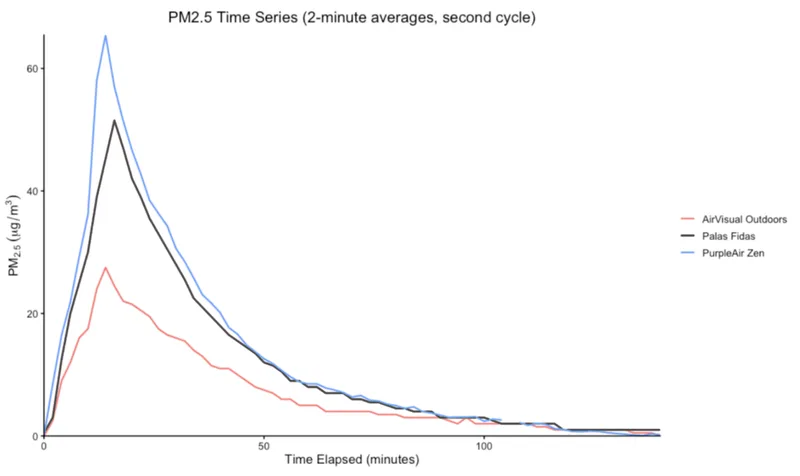

Below are the results from a second, identical test cycle, which show that these behaviours are reproducible.

Outdoor Comparison

Based on the indoor results, my working hypothesis was that the AirVisual Outdoor’s weaker performance was largely driven by the particle type and size distribution used in the chamber test. With that in mind, I wanted to see how both monitors would perform under the conditions they’re actually designed for: outdoors.

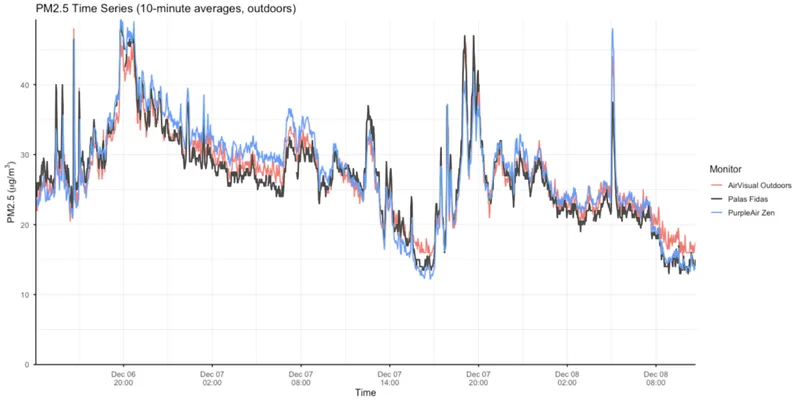

For this comparison, we deployed the Palas Fidas on the roof of our factory in Chiang Mai and ran all devices continuously over a weekend. While Chiang Mai had not yet entered the burning season, ambient PM₂.₅ concentrations were still relatively elevated, averaging around 25 µg/m³ for much of the test period. Unlike the controlled indoor test, the outdoor environment presented a far more heterogeneous mix of particle types and sizes, likely dominated by traffic emissions, household burning, and other typical urban sources.

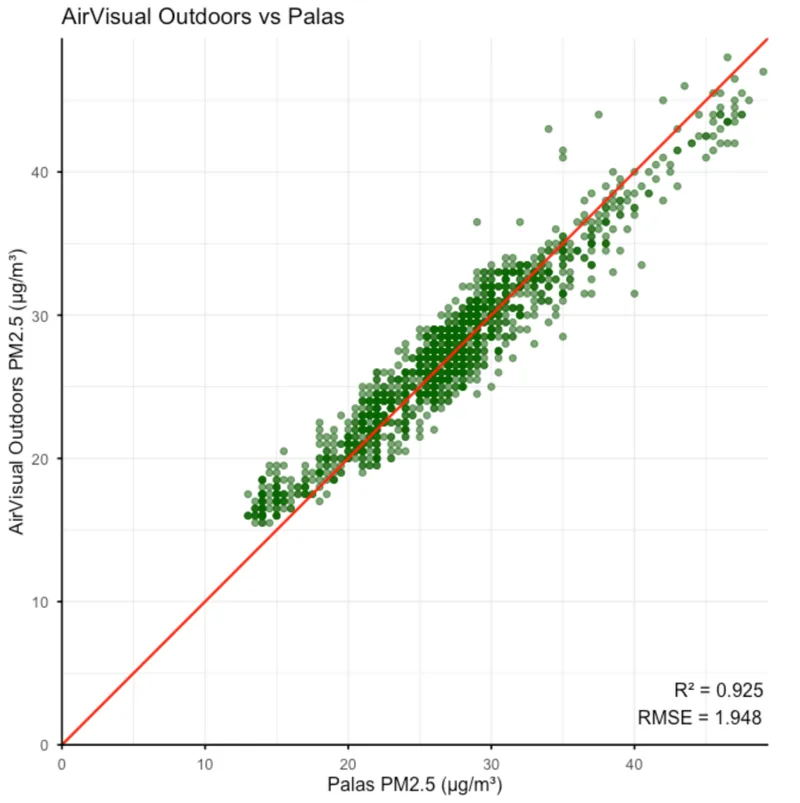

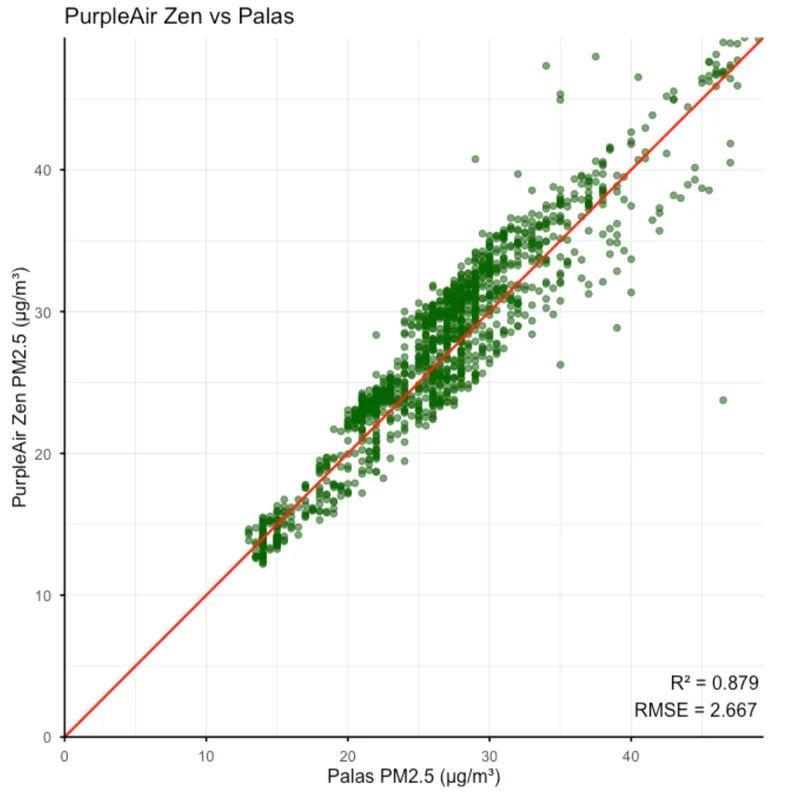

Because this test ran over a longer duration, I used 10-minute averages for all three monitors. As before, readings from both the PurpleAir Zen and the AirVisual Outdoor represent the average of their two internal PM sensors. Under these conditions, both devices performed very well and showed a strong correlation with the reference instrument.

The PurpleAir Zen achieved an R² of 0.88, while the AirVisual Outdoor achieved an R² of 0.92, both indicating a very strong correlation with the reference. RMSE values were also similar, at 2.26 µg/m³ for the PurpleAir Zen and 1.95 µg/m³ for the AirVisual Outdoor. One notable trend is that the PurpleAir Zen appears slightly noisier at higher concentrations, particularly above 35 µg/m³.
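For anyone who wants to run the same kind of comparison on their own data, the sketch below shows how these two metrics can be computed from paired, time-aligned readings. It assumes R² is the squared Pearson correlation between monitor and reference, which is a common convention in sensor evaluations but is an assumption here, and that RMSE is taken on the raw difference between the two. The function names and the values are made up for illustration.

```python
import math


def r_squared(reference, monitor):
    """Squared Pearson correlation between reference and monitor readings."""
    n = len(reference)
    mean_ref = sum(reference) / n
    mean_mon = sum(monitor) / n
    cov = sum((r - mean_ref) * (m - mean_mon) for r, m in zip(reference, monitor))
    var_ref = sum((r - mean_ref) ** 2 for r in reference)
    var_mon = sum((m - mean_mon) ** 2 for m in monitor)
    return cov ** 2 / (var_ref * var_mon)


def rmse(reference, monitor):
    """Root mean square error of the monitor relative to the reference."""
    n = len(reference)
    return math.sqrt(sum((m - r) ** 2 for r, m in zip(reference, monitor)) / n)


# Made-up 10-minute averages in µg/m³ (illustration only, not measured data).
reference = [10.0, 20.0, 30.0, 40.0]
monitor = [12.0, 22.0, 32.0, 42.0]

print(r_squared(reference, monitor))  # 1.0
print(rmse(reference, monitor))       # 2.0
```

The toy data also shows why it helps to report both numbers: a monitor with a constant 2 µg/m³ offset correlates perfectly with the reference (R² of 1.0) but still has an RMSE of 2 µg/m³.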

Overall, these results were impressive, and I was honestly a bit surprised by how well both monitors performed outdoors. Again, it’s worth noting that none of the data shown here had any post-processing, corrections, or calibrations applied.

How Our Results Compare to Other Datasets

Almost a year ago, I wrote an article asking whether AQ-SPEC results alone are a good basis for purchasing decisions. The conclusion was no - not because these datasets lack value, but because monitor performance depends heavily on where and how a device is tested. Results are most meaningful when the test environment closely matches where a monitor will actually be deployed. With that said, I was curious to see if our results aligned with what other sources report.

For the AirVisual Outdoor, the most relevant external benchmark is AIRLAB Thailand, which reflects conditions similar to our own testing. In AIRLAB’s Thai field deployment, the AirVisual Outdoor showed strong correlations with reference instruments (R² of 0.83 to 0.89). Our results showed a slightly higher but similar R² of 0.92, suggesting that the device performs particularly well in Thailand's weather conditions and that our findings are consistent with independent testing conducted in the same region.

PurpleAir has not yet been tested by AIRLAB, so a Thailand-specific comparison isn’t possible. The best available benchmarks instead come from AQ-SPEC and Afri-SET. AQ-SPEC testing of the PurpleAir Flex (which uses the same sensors) reports R² values in the 0.78 to 0.88 range, and our results sit at the upper end of that spread. Afri-SET’s more recent testing of the PurpleAir Flex in Ghana found strong correlations as well, particularly during the wet season.

Conclusion

Going into this testing, my main goal was simply to see how well other consumer-grade outdoor air quality monitors perform under real outdoor conditions. While I expected both devices to do reasonably well given the companies behind them and their reputations, I was genuinely surprised by just how strong the results were.

In our outdoor testing, both monitors showed very high agreement with the reference instrument, with R² values around 0.9. For devices that are often described as “low-cost,” this level of correlation is impressive, not only relative to our ~$30,000 reference monitor, but also when compared with results from established third-party testing programmes. Performance will always depend on local conditions, particle composition, and calibration assumptions, but based on these results, I would be comfortable deploying and relying on either of these monitors in Thailand.

The air quality monitoring space is large enough to support multiple vendors, and when competing products perform well, we think it’s important to say so. Transparent, reference-based testing benefits the entire ecosystem and ultimately leads to better tools and better data for everyone.